Experimenting with Hive in Cloudera Quickstart VM

Overview of MapReduce: Explaining WordCount Example

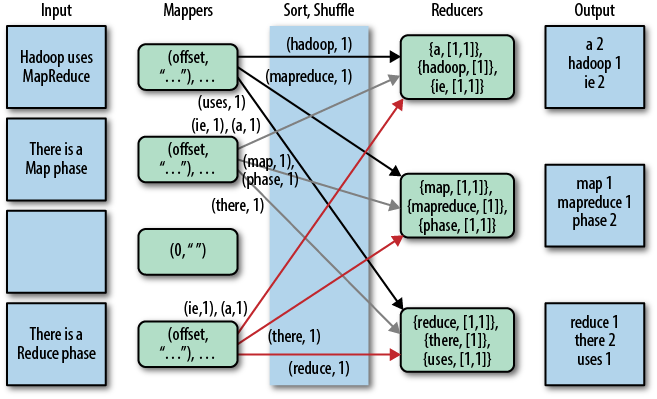

MapReduce is a programming framework that decomposes large data processing jobs into individual tasks that can be executed in parallel across a cluster of servers. The name comes from its two fundamental data transformation operations: map and reduce. These operations become clearer when we walk through a simple example, such as the WordCount program from my last post. The accompanying figure shows the process flow of the WordCount example.
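To make the map and reduce steps concrete, here is a minimal single-machine sketch of WordCount in plain Python. It is not Hadoop code and does not run on a cluster; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are made up for illustration, and the `shuffle` function only stands in for the grouping that the framework performs between the two phases.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group the emitted values by key (done by the framework in real Hadoop)."""
    grouped = defaultdict(list)
    for word, count in pairs:
        grouped[word].append(count)
    return grouped


def reduce_phase(word, counts):
    """Reduce: sum all the counts collected for one word."""
    return word, sum(counts)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence over a list of lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(pairs)
    return dict(reduce_phase(word, counts) for word, counts in grouped.items())


if __name__ == "__main__":
    print(word_count(["Hello Hadoop", "hello world"]))
```

In a real cluster, each call to the map step would run on a different block of the input, and each reducer would receive only the keys assigned to it; the sketch keeps the same data flow while running everything in one process.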

WordCount Example in Cloudera Quickstart VM

WordCount is the Hadoop equivalent of the “Hello World” example program. When you first start learning a new language or framework, you want to run and look into a “Hello World” example to get a feel for the new development environment. Your first few programs in that language or framework are probably extended from those basic “Hello World” examples.

Most Hadoop tutorials are heavy on text but offer little guidance on practical, hands-on experiments (such as this one). Although they are good and thorough tutorials, many new Hadoop users get lost midway through the walls of text.

The purpose of this post is to help new users dive into Hadoop more easily. After reading this, you should be able to:

- Get started with a simple, local Hadoop sandbox for hands-on experiments.

- Perform some simple tasks in HDFS.

- Run the most basic example program WordCount, using your own input data.

An Old Email

I found the email below (names redacted) in an old document folder. It is probably one of the most memorable emails I have ever written. It taught me several significant lessons, especially since I was relatively early in my career at the time:

- Code that is currently correct may not be robust to changes. Watch out for changes, which are frequent in any software project.

- A small change in implementation approach can significantly improve testability of your code.

- Developers and test engineers should NOT be siloed into different departments in any company. They should work closely together, as programmers having different roles (develop vs. test) in a project (hint: Agile). An analogy is forwards/defenders in a soccer match: they are all soccer players, with different roles.

- Organizational boundaries dampen open collaboration only if people let them (or abuse them). Send emails, or walk to the other building if needed, to work closely with your project team members.

Pushing Local Jar File Into Your Local Maven (M2) Repository

Problem:

I want to use the Vertica JDBC driver in my Eclipse Maven project. I have the jar file from the vendor (i.e., downloaded from the HP-Vertica support website) but, obviously, that file is not in the Maven Central repository. My Maven build will not work without that dependency.

This post will also apply if you are behind a firewall and/or do not have external access for some reason.
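One common way to handle this is Maven's `install:install-file` goal, which copies a jar into the local repository under coordinates you choose. The sketch below only builds that command line in Python so it can be inspected before running; the helper name `build_install_command`, the jar path, and the coordinates in the example are hypothetical placeholders, not the vendor's official values.

```python
def build_install_command(jar_path, group_id, artifact_id, version):
    """Build the argument list for Maven's install:install-file goal."""
    return [
        "mvn",
        "install:install-file",
        "-Dfile=" + jar_path,          # path to the jar downloaded from the vendor
        "-DgroupId=" + group_id,       # coordinates are your choice; they only need
        "-DartifactId=" + artifact_id, # to match the <dependency> entry in pom.xml
        "-Dversion=" + version,
        "-Dpackaging=jar",
    ]


if __name__ == "__main__":
    # Hypothetical path and coordinates, for illustration only.
    command = build_install_command(
        "path/to/vertica-jdbc.jar", "com.example.vertica", "vertica-jdbc", "1.0"
    )
    print(" ".join(command))
```

To actually install the jar, the list can be passed to `subprocess.run(command, check=True)` on a machine where `mvn` is on the PATH. The same group ID, artifact ID, and version then go into the project's `pom.xml` as a regular dependency.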

Automated Performance Logging and Plotting for Cassandra

In this mini-project, I created a Python script (PerformanceLog.py) to record JMX values from a running Cassandra instance, using JMXTerm (http://wiki.cyclopsgroup.org/jmxterm/), and do the following:

- Put the records into a Cassandra table.

- Plot the results.

The project is based on a Cassandra interview question found on Glassdoor.

Currently, the first version only works with the Windows version of Cassandra (installed with the DataStax Community installer). It was developed and tested in Python 2.7.
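The recording step can be pictured as a simple polling loop. The sketch below is not the actual PerformanceLog.py; it assumes a caller-supplied `read_value` function (hypothetical, standing in for whatever invokes JMXTerm and parses its output) and collects timestamped records that could later be inserted into a table or plotted.

```python
import time


def sample_metric(read_value, metric_name, samples, interval_seconds=1.0):
    """Poll read_value() a fixed number of times and return timestamped records.

    read_value is a hypothetical callable that returns the current value of
    one metric; in the real script this is where JMXTerm would be queried.
    """
    records = []
    for i in range(samples):
        records.append({
            "timestamp": time.time(),
            "metric": metric_name,
            "value": read_value(),
        })
        # Wait between samples, but not after the last one.
        if i < samples - 1:
            time.sleep(interval_seconds)
    return records
```

Keeping the reader as a parameter means the loop can be tried out with a fake function before pointing it at a running Cassandra instance.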

Curl Cookbook

This post lists some recipes for the curl command.